CRM lead scoring in B2B assigns numeric values to leads or accounts based on ICP fit and buying intent. Its purpose is to make SDR prioritization systematic rather than subjective.

When built correctly, the model determines which accounts get routed, sequenced, and worked immediately. When it fails, SDR time is wasted and pipeline quality drops.

In Short: A CRM lead scoring model connects ICP fit and behavioral intent to a numeric threshold.

That threshold drives routing, sequencing, and SDR prioritization automatically.

When the score is aligned to your ICP, pipeline quality improves. When it is not, activity increases, but revenue does not.

This guide is for RevOps leaders, SDR managers, and sales ops teams who want to build or fix a CRM lead scoring system that actually works in practice, not just on paper.

What CRM Lead Scoring Means in a B2B Environment

CRM lead scoring assigns a numeric value to an account that reflects how sales-ready it is. That score determines whether the account should be worked now, nurtured, or kept out of active outreach.

In B2B, the score is built from three core inputs.

1. Firmographic Fit

Does the company match your ideal customer profile?

- Industry

- Company size

- Revenue range

- Location

- Business model

If the company does not match your ICP, the score should reflect that immediately.

2. Technographic Alignment

Does the company use tools or infrastructure that indicate readiness or compatibility?

- CRM platform in use

- Sales engagement tools

- Marketing automation systems

- Data enrichment providers

- Relevant integrations

In many B2B SaaS models, technographic alignment predicts conversion more accurately than industry alone. A company using the right stack is often operationally ready to buy.

3. Behavioral Intent

Is someone at the company actively showing buying signals?

- Pricing page visits

- Demo requests

- High-value content downloads

- Email engagement

- Product interaction

Intent without fit is noise.

Fit without intent is a prospecting target, not a sales-ready account.

Both must work together for the score to mean anything.
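The relationship between fit and intent can be sketched as a two-axis check. This is a minimal illustration, not a prescribed method: the function name, the 0 to 100 scale for each axis, and the cutoff of 50 are all assumptions chosen for the example.

```python
def classify_account(fit_score: int, intent_score: int, cutoff: int = 50) -> str:
    """Place an account in one of four buckets from two 0-100 scores.

    The cutoff of 50 is an illustrative assumption, not a recommended value.
    """
    high_fit = fit_score >= cutoff
    high_intent = intent_score >= cutoff
    if high_fit and high_intent:
        return "sales-ready"
    if high_fit:
        return "prospecting target"  # fit without intent
    if high_intent:
        return "noise"  # intent without fit
    return "out of scope"


print(classify_account(80, 75))
print(classify_account(80, 10))
print(classify_account(10, 90))
```

Keeping the two axes separate until the final step makes it easier to see why an account landed where it did.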

What Account Prioritization Actually Means

Account prioritization is not sorting a list manually.

It means:

- The CRM surfaces high-scoring accounts automatically

- Routing logic assigns them to the correct rep

- The correct sequence launches

- SDRs work from a ranked queue

If the score does not trigger routing or outreach, it is just a number stored in a field.

Most CRM lead scoring models fail not because the math is wrong, but because the score is disconnected from action.

Understanding this makes it easier to see where scoring systems typically break down.

Why Most CRM Lead Scoring Models Fail

The failure patterns in CRM lead scoring are consistent across most B2B teams. They’re not random. Here’s what actually goes wrong.

Scoring rules that were set once and never touched. Most teams build their CRM scoring rules during an implementation project and never revisit them. The ICP shifts. New segments emerge. The market changes. But the logic stays frozen. Accounts that no longer fit keep getting surfaced. High-fit accounts get missed.

No clear ICP to score against. If your team hasn’t agreed on the specific attributes that define your best customers, any score you assign is just a guess. The model reflects what someone assumed was important, not what actually predicts conversion.

Stale data running the scoring logic. CRM data degrades quickly. Job titles change. Companies restructure. Contacts move on. A scoring model running on outdated information is scoring a version of reality that no longer exists. Data freshness isn’t a nice-to-have: it’s what makes the model reliable.

One score applied to every type of account. Enterprise accounts and SMB accounts don’t behave the same way. A VP of Sales at a 500-person SaaS company and a founder at a 10-person startup are not the same buyer. When there’s no segmentation logic, the model flattens real differences into a meaningless average.

Scores that don’t trigger any action. Even a well-built score becomes useless if the routing logic downstream is broken. If a high-scoring account doesn’t automatically get assigned to the right rep or launch the right sequence, the number just sits in the CRM doing nothing. Routing logic is what turns a score into action.

Sales and marketing defining “ready” differently. Marketing is optimizing for MQL volume. Sales is optimizing for meeting quality and SQL conversion. When these two teams don’t agree on what a sales-ready account looks like, the scoring model becomes a political compromise rather than an operational tool. It satisfies a dashboard without improving pipeline quality. One of the clearest signs of this misalignment is when MQL numbers look healthy but SQL conversion rates keep falling.
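One way to watch for the MQL and SQL misalignment described above is to track conversion rate next to MQL volume. The sketch below assumes you can export MQL and SQL counts per period. The function names and the sample figures are hypothetical.

```python
def conversion_rate(mqls: int, sqls: int) -> float:
    """Share of MQLs that became SQLs in one period."""
    return sqls / mqls if mqls else 0.0


def misalignment_warning(periods: list) -> bool:
    """Flag when MQL volume holds or grows while SQL conversion keeps falling.

    periods is a list of (mqls, sqls) tuples, oldest first.
    """
    if len(periods) < 2:
        return False
    rates = [conversion_rate(m, s) for m, s in periods]
    volume_holding = all(b[0] >= a[0] for a, b in zip(periods, periods[1:]))
    rate_falling = all(later < earlier for earlier, later in zip(rates, rates[1:]))
    return volume_holding and rate_falling


# Hypothetical quarterly figures: volume grows, conversion drops each quarter.
quarters = [(400, 80), (450, 72), (500, 60)]
print(misalignment_warning(quarters))
```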

Fixing these issues doesn’t require a new CRM. It requires building a B2B lead scoring model on a set of clear, agreed-upon operational rules.

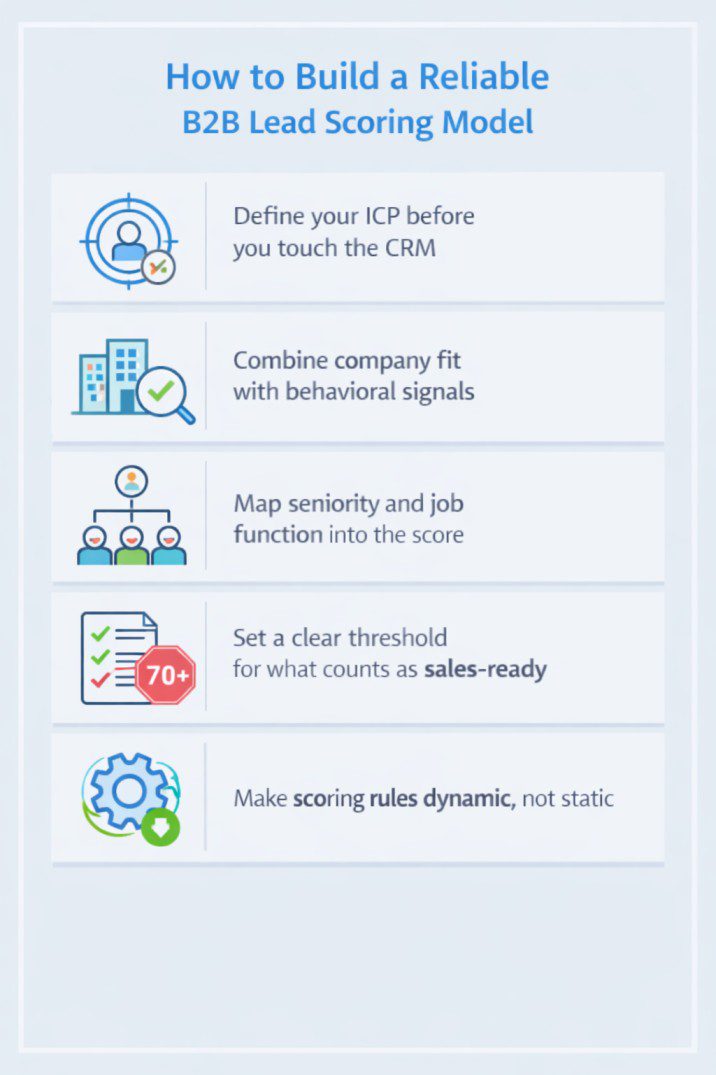

How to Build a Reliable B2B Lead Scoring Model

A solid B2B lead scoring model is built in layers. Each layer adds clarity and reduces noise. Here’s how to approach it practically.

Define your ICP before you touch the CRM. Your ideal customer profile is the foundation of every scoring decision. Pull your last 50 closed-won deals and look for what they had in common: industry, company size, revenue, tech stack, and who was in the buying committee. Document it. Get both sales and marketing to agree on it. Without this step, everything built on top of it is guesswork.
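The closed-won review can start as a simple frequency count. The sketch below assumes the deals have been exported as a list of dictionaries. The field names and sample records are hypothetical, and a real analysis would use your actual 50 deals.

```python
from collections import Counter


def attribute_share(deals: list, field: str, top_n: int = 3) -> list:
    """Return the most common values of one field with their share of deals."""
    counts = Counter(deal[field] for deal in deals if deal.get(field))
    total = sum(counts.values())
    return [(value, round(n / total, 2)) for value, n in counts.most_common(top_n)]


# Hypothetical export of closed-won deals (a real list would be longer).
closed_won = [
    {"industry": "SaaS", "size_band": "201-500"},
    {"industry": "SaaS", "size_band": "201-500"},
    {"industry": "SaaS", "size_band": "51-200"},
    {"industry": "Fintech", "size_band": "201-500"},
]

for field in ("industry", "size_band"):
    print(field, attribute_share(closed_won, field))
```

Attributes that dominate the closed-won list are candidates for ICP criteria. Sales and marketing still need to agree on them before they become scoring rules.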

Combine company fit with behavioral signals. Score accounts on two dimensions, not one. Company fit, meaning the firmographic and technographic match, tells you if they’re the right type of business. Behavioral signals tell you if they’re actively interested. Use both to determine whether an account is ready for outreach now or just worth monitoring.

Map seniority and job function into the score. Not every contact at a target account is equally valuable. A Director of Revenue Operations matters more than a Marketing Coordinator if you sell a RevOps tool. Build seniority and function into your scoring logic. As a starting point, C-suite and VP level in a relevant function might score 30 points, Director level 20, Manager level 10, and individual contributors 5. Adjust based on what your historical data shows about your actual buyers.
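The starting-point values above could be encoded as follows. This is a rough sketch: the keyword matching on job titles is a simplifying assumption, and so is the choice to give 0 points to contacts outside the relevant function. Real title data usually needs a more careful mapping.

```python
# Title keywords treated as C-suite or VP level (illustrative, not exhaustive).
EXECUTIVE_WORDS = {"chief", "ceo", "cro", "coo", "cmo", "cfo", "cto", "vp", "svp", "evp"}


def contact_points(title: str, relevant_keywords: set) -> int:
    """Score a contact by seniority, but only if the function is relevant."""
    lowered = title.lower()
    words = set(lowered.replace(",", " ").split())
    if not words & set(relevant_keywords):
        return 0  # assumption: irrelevant functions earn no seniority points
    if words & EXECUTIVE_WORDS or "vice president" in lowered:
        return 30
    if "director" in words:
        return 20
    if "manager" in words:
        return 10
    return 5  # individual contributor in a relevant function


# Hypothetical keyword set for a team selling a RevOps tool.
REVOPS = {"revenue", "sales", "revops"}

for title in ("Director of Revenue Operations", "VP of Sales", "Marketing Coordinator"):
    print(title, contact_points(title, REVOPS))
```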

Set a clear threshold for what counts as sales-ready. This is where most teams stay vague. You need a specific number, not a range. If your max score is 100, decide that 70 and above is sales-ready, 40 to 69 goes into nurture, and below 40 stays out of active sequences. The exact numbers matter less than having numbers that everyone has agreed on.
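Written as code, the bands leave no room for ambiguity at the boundaries. In this sketch a score of exactly 70 counts as sales-ready and exactly 40 counts as nurture. The function name and the label "hold" are assumptions.

```python
def score_band(score: int, sales_ready: int = 70, nurture: int = 40) -> str:
    """Map a 0-100 score to one of three agreed bands."""
    if score >= sales_ready:
        return "sales-ready"
    if score >= nurture:
        return "nurture"
    return "hold"  # kept out of active sequences


for value in (85, 70, 69, 40, 39):
    print(value, score_band(value))
```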

Make scoring rules dynamic, not static. Set your CRM to recalculate scores automatically, triggered by new activity, enrichment updates, or a regular scheduled refresh. This keeps scores current without anyone having to maintain them manually. Most modern CRMs support this out of the box. The setup takes effort once. The alternative is a model that decays silently every week.
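The recalculation triggers can be expressed as a small check. This sketch is not tied to any particular CRM: the function and parameter names are hypothetical, and the seven-day refresh interval is an assumed value to adjust for your own cadence.

```python
from datetime import datetime, timedelta
from typing import Optional


def needs_rescore(
    last_scored: datetime,
    now: datetime,
    last_activity: Optional[datetime] = None,
    last_enriched: Optional[datetime] = None,
    max_age: timedelta = timedelta(days=7),  # assumed refresh interval
) -> bool:
    """Return True if new activity, new enrichment, or age calls for a rescore."""
    for changed_at in (last_activity, last_enriched):
        if changed_at is not None and changed_at > last_scored:
            return True
    return now - last_scored >= max_age


scored = datetime(2024, 1, 1)
print(needs_rescore(scored, now=datetime(2024, 1, 3)))
print(needs_rescore(scored, now=datetime(2024, 1, 3), last_activity=datetime(2024, 1, 2)))
```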

Add a scoring formula as a working reference.

Here’s a simple example of how scoring components can be weighted:

| Scoring Factor | Points |
|---|---|
| ICP industry match | 20 |
| Company size within target range | 15 |
| Decision-maker contact present | 30 |
| Visited pricing or demo page | 20 |
| Engaged with 2+ content pieces | 15 |
| Total possible | 100 |

Sales-ready threshold: 70+. Adjust weights based on what your closed-won data tells you.
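The example weights translate directly into a rule-based scorer. The point values below come from the table. The dictionary keys and the boolean signal format are assumptions made for the sketch.

```python
# Point values from the example table; key names are illustrative.
WEIGHTS = {
    "icp_industry_match": 20,
    "company_size_in_range": 15,
    "decision_maker_present": 30,
    "visited_pricing_or_demo": 20,
    "engaged_2plus_content": 15,
}
SALES_READY_THRESHOLD = 70


def score_account(signals: dict) -> int:
    """Sum the points for every factor that is true for this account."""
    return sum(points for factor, points in WEIGHTS.items() if signals.get(factor))


account = {
    "icp_industry_match": True,
    "decision_maker_present": True,
    "visited_pricing_or_demo": True,
}
total = score_account(account)
print(total, total >= SALES_READY_THRESHOLD)
```

Missing signals count as false, so a partially enriched account scores lower instead of raising an error.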

A real example of this working: a SaaS company running outbound to mid-market HR teams rebuilt their scoring model to weight decision-maker seniority at 30 points and pricing page visits at 20. Within one quarter, their SDR-to-meeting conversion rate improved by 34% because reps stopped reaching out to companies where only junior contacts had engaged.

With the model designed, the next challenge is making it run consistently inside your CRM, not just exist as a documented plan.

Operationalizing CRM Lead Scoring Inside Your CRM

A good scoring model is only as useful as its day-to-day operation. The system needs to run automatically, consistently, and without relying on manual effort to keep it honest.

Make sure every account gets scored the same way, regardless of how it entered. Whether a record came in through an inbound form, a list upload, or an SDR manually adding it, the scoring logic should apply the same way every time. This requires clean field mapping and workflows that trigger scoring at the point of record creation, not as a cleanup job later.

Automate routing when an account hits the sales-ready threshold. The moment an account crosses your score threshold, the CRM should automatically assign it to the right rep based on territory or segment, enroll it in the right outreach sequence, and create a task with context on why it scored highly. Manual routing adds delays and creates inconsistency. Automation removes both.
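In outline, the routing step might look like the sketch below. It is written as plain Python, not against any CRM's workflow API. The owner names, sequence names, and account fields are hypothetical placeholders.

```python
from typing import Optional

# Hypothetical lookup tables; in practice these live in CRM assignment rules.
TERRITORY_OWNERS = {"NA": "rep_na", "EMEA": "rep_emea"}
SEGMENT_SEQUENCES = {"enterprise": "enterprise_outbound", "smb": "smb_outbound"}


def route_account(account: dict, threshold: int = 70) -> Optional[dict]:
    """Return owner, sequence, and task for a sales-ready account, else None."""
    if account["score"] < threshold:
        return None
    reasons = ", ".join(account.get("score_reasons", [])) or "no reasons recorded"
    return {
        "owner": TERRITORY_OWNERS.get(account["territory"], "unassigned_queue"),
        "sequence": SEGMENT_SEQUENCES.get(account["segment"], "default_outbound"),
        "task": f"Work {account['name']} (score {account['score']}): {reasons}",
    }


acme = {
    "name": "Acme",
    "score": 85,
    "territory": "EMEA",
    "segment": "enterprise",
    "score_reasons": ["ICP industry match", "pricing page visit"],
}
print(route_account(acme))
```

Falling back to an explicit unassigned queue makes routing gaps visible, so accounts don't sit unowned without anyone noticing.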

Keep your segment definitions stable. If segment rules change frequently, your SDRs will stop trusting the queue and start re-evaluating every account themselves, which kills the point of scoring entirely. Define your segments, document them clearly, and only revisit them on a structured schedule tied to pipeline reviews.

Connect enrichment tools to keep data fresh automatically. Tools like Pintel.AI, Clay, Clearbit, ZoomInfo, or Apollo can update company data and contact information on a recurring basis. When those fields feed directly into your scoring logic, the scores update automatically as the data changes. This solves the data freshness problem without creating extra work for your team. Most CRMs cannot maintain accurate scoring without some form of external enrichment. The data inside the CRM alone decays too quickly.
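A freshness check on the fields that feed the score could look like this. The sketch assumes each scoring field carries a last-updated date. The 90-day limit and the field names are illustrative assumptions, and no specific enrichment tool's API is implied.

```python
from datetime import date


def stale_fields(field_updated_on: dict, today: date, max_age_days: int = 90) -> list:
    """List scoring fields whose last update is older than the allowed age."""
    return sorted(
        name
        for name, updated in field_updated_on.items()
        if (today - updated).days > max_age_days
    )


# Hypothetical last-updated dates for one account's scoring fields.
record = {
    "job_title": date(2024, 1, 15),
    "headcount": date(2024, 6, 1),
}
print(stale_fields(record, today=date(2024, 6, 30)))
```

Fields that come back stale are candidates for a re-enrichment request before the score is trusted.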

The goal is zero manual research before outreach. If SDRs are routinely checking LinkedIn to verify job titles or Googling companies to confirm headcount before deciding whether to reach out, the scoring model isn’t doing its job. When an account surfaces in the queue, the rep should have enough context from the CRM alone to make a confident decision. Manual research reduction is one of the clearest signals that a scoring system is actually working.

When these pieces are in place, the scoring model stops being a data exercise and starts producing real results across the sales team.

What Happens When CRM Lead Scoring Works

When a CRM lead scoring system is running well, the effects are visible across the entire GTM motion. Here’s what changes.

SDRs spend time on better accounts. Instead of working through a flat list and hoping something connects, SDRs are working a queue where every account has already cleared a meaningful threshold. The ratio of real conversations to total touches goes up. Meeting conversion rates improve. Time isn’t wasted on accounts that were never going to buy.

Before: An SDR works 200 accounts with no clear prioritization and spends 40% of their time on low-fit accounts.

After: The same SDR works a scored queue of 60 accounts and spends 90% of their time on accounts that match the ICP and have shown intent.

CRM data quality improves as a side effect. When scores depend on clean, structured data, the whole team becomes more careful about data quality. Fields get filled in properly. Duplicate records create problems that surface quickly and get fixed. The scoring model creates a natural incentive for data discipline that no internal policy ever quite manages to achieve.

Prioritization becomes consistent and defensible. When a rep asks why they’re working a particular account, the answer is in the CRM. When a manager reviews the pipeline, the account mix reflects a clear lead qualification framework, not individual rep instinct. This makes coaching easier and pipeline conversations more productive.

Pipeline becomes more predictable. Fewer outreach attempts on low-fit accounts means less capacity wasted on work that won’t convert. Because the scoring model is aligned to your ICP, the accounts entering the pipeline look more like your best historical customers, which makes forecasting more reliable. Revenue predictability starts with scoring discipline.

These outcomes are achievable consistently when the model is built and operated correctly. The remaining question is whether your current model is working or needs a rethink.

When to Rethink Your CRM Lead Scoring Framework

Use this checklist to assess whether your current setup needs a structural review. If three or more of these apply, the model needs more than a small fix.

- SDRs regularly skip or re-rank the accounts the CRM gives them

- Scoring rules haven’t been updated in more than six months

- Sales and marketing don’t agree on what a sales-ready account looks like

- Contact seniority and job function aren’t factored into the score

- Accounts score highly but consistently fail to convert past the first meeting

- Enrichment data isn’t connected to scoring: reps are doing manual research

- Routing logic requires manual intervention more than 20% of the time

- Your ICP has changed since the scoring model was last built

- Pipeline quality has declined even though MQL volume has stayed flat or grown

- The scoring model isn’t documented or understood by the current RevOps team
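The "three or more" rule and the 20% routing check are easy to make explicit. In this small sketch the flag names are shorthand for the checklist items and the counts are hypothetical.

```python
def manual_routing_flag(manual: int, total: int, limit: float = 0.20) -> bool:
    """True when more than 20% of routed accounts needed manual intervention."""
    return total > 0 and manual / total > limit


def needs_structural_review(flags: dict, min_hits: int = 3) -> bool:
    """True when at least min_hits checklist items apply."""
    return sum(1 for hit in flags.values() if hit) >= min_hits


# Hypothetical self-assessment covering a few of the checklist items.
flags = {
    "sdrs_rerank_queue": True,
    "rules_older_than_six_months": True,
    "manual_routing_over_limit": manual_routing_flag(manual=30, total=100),
    "icp_changed_since_build": False,
}
print(needs_structural_review(flags))
```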

A review doesn’t mean rebuilding your CRM from scratch. In most cases, it means redefining the ICP, updating the scoring weights, connecting enrichment to the scoring fields, and documenting clear threshold logic for routing. The work is straightforward, and the impact on SDR productivity and pipeline quality shows up quickly.

CRM lead scoring is not a one-time setup. It’s an operational layer that needs to evolve with your market, your ICP, and your GTM strategy. Teams that treat it as a configuration task will keep seeing the same problems: high activity, low quality, unpredictable revenue. Teams that treat it as an ongoing discipline build a compounding advantage over time.

Wrap-Up

CRM lead scoring in B2B is not about assigning points for the sake of structure. It is about creating a clear, shared definition of what a sales-ready account looks like.

When your scoring model is aligned to your ICP, refreshed with accurate data, and tied directly to routing logic, SDR prioritization becomes consistent. MQL-to-SQL handoffs become cleaner. Pipeline quality becomes easier to trust.

If your team is debating which accounts to work, manually re-ranking CRM lists, or seeing strong MQL numbers but weak SQL conversion, the scoring model likely needs attention.

Review it regularly. Tie it to real conversion data. Keep it dynamic.

Because in B2B sales, prioritization drives performance, and CRM lead scoring is what makes prioritization systematic instead of subjective.

Frequently Asked Questions

What is CRM lead scoring in B2B?

CRM lead scoring in B2B is a structured method of assigning numeric scores to leads or accounts based on ICP fit and behavioral intent to identify sales-ready accounts.

What is the difference between lead scoring and account scoring in B2B?

Lead scoring evaluates individual contacts, while account scoring evaluates the entire company. In B2B sales, account scoring is often more accurate because buying decisions involve multiple stakeholders.

How does CRM lead scoring connect MQL and SQL?

CRM lead scoring creates a shared numeric threshold between Marketing Qualified Leads (MQLs) and Sales Qualified Leads (SQLs), improving handoffs and increasing SQL conversion rates.

How often should a B2B lead scoring model be updated?

A B2B lead scoring model should be reviewed at least every six months, or whenever your ICP, market segment, or SQL conversion rates change.

What score makes a lead sales-ready in CRM lead scoring?

A common starting point is 70 out of 100, as in the example model in this guide, but the right score depends on your ICP alignment and how your scoring weights are distributed.

What is the difference between rule-based and predictive lead scoring?

Rule-based lead scoring uses predefined criteria inside the CRM, while predictive lead scoring uses historical conversion data and machine learning to forecast sales readiness.